Hiya, of us, welcome to TechCrunch’s common AI e-newsletter. In order for you this in your inbox each Wednesday, enroll here.

You would possibly’ve seen we skipped the e-newsletter final week. The explanation? A chaotic AI information cycle made much more pandemonious by Chinese AI company DeepSeek’s sudden rise to prominence, and the response from virtually ever nook of business and authorities.

Luckily, we’re again on observe — and never a second too quickly, contemplating final weekend’s newsy developments from OpenAI.

OpenAI CEO Sam Altman stopped over in Tokyo to have an onstage chat with Masayoshi Son, the CEO of Japanese conglomerate SoftBank. SoftBank is a significant OpenAI investor and partner, having pledged to help fund OpenAI’s large information heart infrastructure undertaking within the U.S.

So Altman in all probability felt he owed Son just a few hours of his time.

What did the 2 billionaires discuss? A number of abstracting work away by way of AI “brokers,” per secondhand reporting. Son mentioned his firm would spend $3 billion a yr on OpenAI merchandise and would group up with OpenAI to develop a platform, “Cristal [sic] Intelligence,” with the aim of automating tens of millions of historically white-collar workflows.

“By automating and autonomizing all of its duties and workflows, SoftBank Corp. will rework its enterprise and providers, and create new worth,” SoftBank mentioned in a press release Monday.

I ask, although, what the standard employee is to consider all this automating and autonomizing?

Like Sebastian Siemiatkowski, the CEO of fintech Klarna, who typically brags about AI replacing humans, Son appears to be of the opinion that agentic stand-ins for employees can solely precipitate fabulous wealth. Glossed over is the price of the abundance. Ought to the widespread automation of jobs come to go, unemployment on an enormous scale seems the likeliest outcome.

It’s discouraging that these on the forefront of the AI race — firms like OpenAI and traders like SoftBank — select to spend press conferences portray an image of automated companies with fewer employees on the payroll. They’re companies, in fact — not charities. And AI improvement doesn’t come low cost. However maybe folks would trust AI if these guiding its deployment confirmed a bit extra concern for his or her welfare.

Meals for thought.

Information

Deep research: OpenAI has launched a brand new AI “agent” designed to assist folks conduct in-depth, advanced analysis utilizing ChatGPT, the corporate’s AI-powered chatbot platform.

O3-mini: In different OpenAI information, the corporate launched a brand new AI “reasoning” mannequin, o3-mini, following a preview final December. It’s not OpenAI’s strongest mannequin, however o3-mini boasts improved effectivity and response pace.

EU bans risky AI: As of Sunday within the European Union, the bloc’s regulators can ban using AI programs they deem to pose “unacceptable danger” or hurt. That features AI used for social scoring and subliminal promoting.

A play about AI “doomers”: There’s a brand new play out about AI “doomer” tradition, loosely based mostly on Sam Altman’s ousting as CEO of OpenAI in November 2023. My colleagues Dominic and Rebecca share their ideas after watching the premiere.

Tech to boost crop yields: Google’s X “moonshot manufacturing facility” this week introduced its newest graduate. Heritable Agriculture is a data- and machine learning-driven startup aiming to enhance how crops are grown.

Analysis paper of the week

Reasoning fashions are higher than your common AI at fixing issues, notably science- and math-related queries. However they’re no silver bullet.

A new study from researchers at Chinese company Tencent investigates the problem of “underthinking” in reasoning fashions, the place fashions prematurely, inexplicably abandon probably promising chains of thought. Per the examine’s outcomes, “underthinking” patterns are inclined to happen extra ceaselessly with more durable issues, main fashions to change between reasoning chains with out arriving at solutions.

The group proposes a repair that employs a “thought-switching penalty” to encourage fashions to “totally” develop every line of reasoning earlier than contemplating alternate options, boosting fashions’ accuracy.

Mannequin of the week

A group of researchers backed by TikTok proprietor ByteDance, Chinese language AI firm Moonshot, and others launched a brand new open mannequin able to producing comparatively high-quality music from prompts.

The mannequin, known as YuE, can output a track up to some minutes in size full with vocals and backing tracks. It’s below an Apache 2.0 license, which means the mannequin can be utilized commercially with out restrictions.

There are downsides, nevertheless. Operating YuE requires a beefy GPU; producing a 30-second track takes six minutes with an Nvidia RTX 4090. Furthermore, it’s not clear if the mannequin was educated utilizing copyrighted information; its creators haven’t mentioned. If it seems copyrighted songs had been certainly within the mannequin’s coaching set, customers may face future IP challenges.

Seize bag

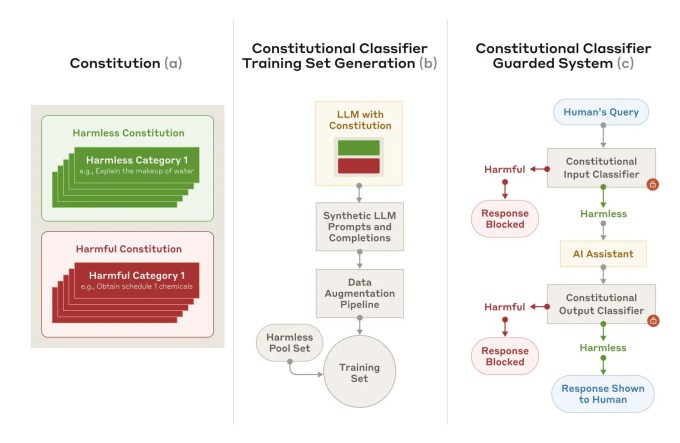

AI lab Anthropic claims that it has developed a way to extra reliably defend in opposition to AI “jailbreaks,” the strategies that can be utilized to bypass an AI system’s security measures.

The approach, Constitutional Classifiers, depends on two units of “classifier” AI fashions: an “enter” classifier and an “output” classifier. The enter classifier appends prompts to a safeguarded mannequin with templates describing jailbreaks and different disallowed content material, whereas the output classifier calculates the probability {that a} response from a mannequin discusses dangerous data.

Anthropic says that Constitutional Classifiers can filter the “overwhelming majority” of jailbreaks. Nevertheless, it comes at a value. Every question is 25% extra computationally demanding, and the safeguarded mannequin is 0.38% much less prone to reply innocuous questions.

Trending Merchandise