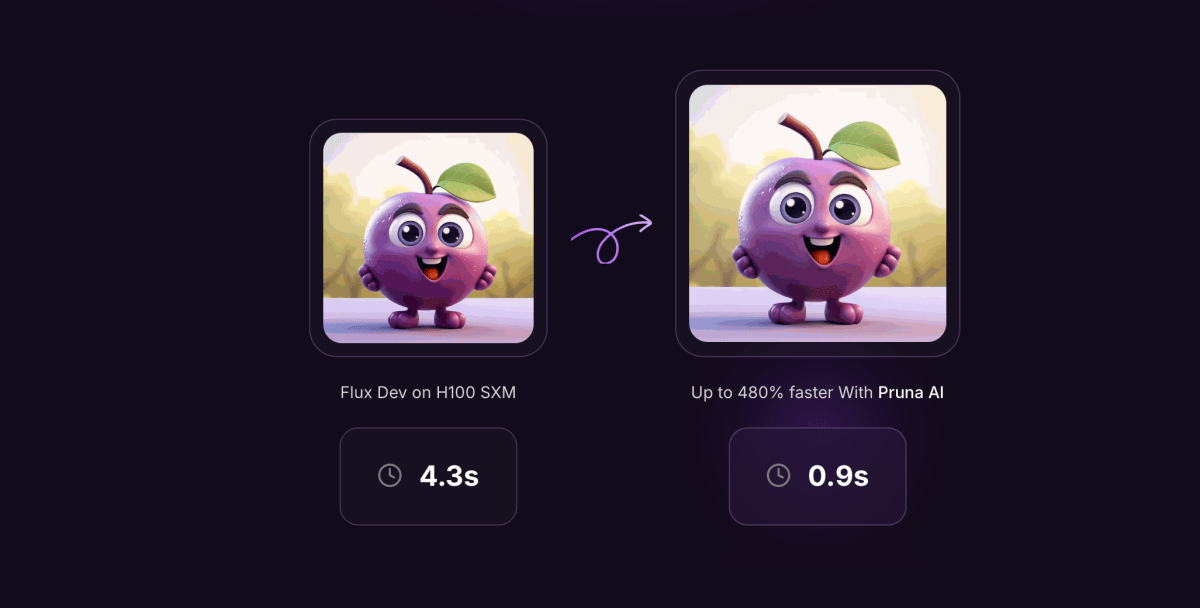

Pruna AI, a European startup that has been engaged on compression algorithms for AI fashions, is making its optimization framework open source on Thursday.

Pruna AI has been making a framework that applies a number of effectivity strategies, resembling caching, pruning, quantization and distillation, to a given AI mannequin.

“We additionally standardize saving and loading the compressed fashions, making use of mixtures of those compression strategies, and in addition evaluating your compressed mannequin after you compress it,” Pruna AI co-fonder and CTO John Rachwan instructed TechCrunch.

Particularly, Pruna AI’s framework can consider if there’s vital high quality loss after compressing a mannequin and the efficiency good points that you simply get.

“If I have been to make use of a metaphor, we’re just like how Hugging Face standardized transformers and diffusers — methods to name them, methods to save them, load them, and many others. We’re doing the identical, however for effectivity strategies,” he added.

Large AI labs have already been utilizing numerous compression strategies already. As an example, OpenAI has been counting on distillation to create quicker variations of its flagship fashions.

That is doubtless how OpenAI developed GPT-4 Turbo, a quicker model of GPT-4. Equally, the Flux.1-schnell picture era mannequin is a distilled model of the Flux.1 mannequin from Black Forest Labs.

Distillation is a way used to extract information from a big AI mannequin with a “teacher-student” mannequin. Builders ship requests to a trainer mannequin and report the outputs. Solutions are typically in contrast with a dataset to see how correct they’re. These outputs are then used to coach the scholar mannequin, which is skilled to approximate the trainer’s habits.

“For large corporations, what they normally do is that they construct these things in-house. And what yow will discover within the open supply world is normally primarily based on single strategies. For instance, let’s say one quantization methodology for LLMs, or one caching methodology for diffusion fashions,” Rachwan stated. “However you can not discover a device that aggregates all of them, makes all of them simple to make use of and mix collectively. And that is the massive worth that Pruna is bringing proper now.”

Whereas Pruna AI helps any type of fashions, from massive language fashions to diffusion fashions, speech-to-text fashions and pc imaginative and prescient fashions, the corporate is focusing extra particularly on picture and video era fashions proper now.

A few of Pruna AI’s present customers embrace Scenario and PhotoRoom. Along with the open supply version, Pruna AI has an enterprise providing with superior optimization options together with an optimization agent.

“Essentially the most thrilling function that we’re releasing quickly will probably be a compression agent,” Rachwan stated. “Principally, you give it your mannequin, you say: ‘I need extra pace however don’t drop my accuracy by greater than 2%.’ After which, the agent will simply do its magic. It can discover the perfect mixture for you, return it for you. You don’t must do something as a developer.”

Pruna AI expenses by the hour for its professional model. “It’s just like how you’ll consider a GPU if you lease a GPU on AWS or any cloud service,” Rachwan stated.

And in case your mannequin is a essential a part of your AI infrastructure, you’ll find yourself saving some huge cash on inference with the optimized mannequin. For instance, Pruna AI has made a Llama mannequin eight occasions smaller with out an excessive amount of loss utilizing its compression framework. Pruna AI hopes its prospects will take into consideration its compression framework as an funding that pays for itself.

Pruna AI raised a $6.5 million seed funding spherical just a few months in the past. Buyers within the startup embrace EQT Ventures, Daphni, Motier Ventures and Kima Ventures.

Trending Merchandise